I hinted at file growths in my previous posts about shrinking data and log files. Then I talked about growing log files in my post Database Log VLFs. However, there are still some unanswered questions about growing data files.

Should I use autogrowth? What should I set it to grow by?

Why do manual growths, and how do I know how much to grow by or when a growth is needed?

Can I and should I use Instant File Initialization? If I can and should, how do I even know if it’s turned on?

Leave Autogrowth On?

Yes, absolutely. This isn’t a question.

This could be worded as “Do you want a slight pause or do you want your application to fail?” The answer is a little more obvious when you word it that way. The only time I’ve seen an appropriate answer of “No” is when you have multiple data files in a filegroup and only want one to grow.

To answer how long this pause will be you have to ask these two questions. Are you able to use Instant File Initialization? How much are you growing by if you can’t use Instant File Initialization? I’ll discuss Instant File Initialization later in this post, but it’s basically the difference between “this space is yours immediately” instead of writing zeros SQL Server doesn’t care about to be paranoid about security.

There are competing factors as to how much you want to autogrowth to be. The only real consensus is that the defaults are horrible. You need to balance out how long of a pause it will take for a growth, how long of a pause your users will tolerate, how fast is your database growing, how much fragmentation smaller growths will cause, and how much fragmentation you will tolerate.

By default, data files grow at 1 MB and log files grow at 10%. I covered log file growths rather well in my post Database Log VLFs, so we’ll focus on the data files here. Think about it, if you have a 100 GB database that got there by the defaults, it got there in 102,400 pieces. Not good.

If the defaults are horrible, what’s good? Tiny is bad, huge is bad, and percentage-based growth just lets you automatically drift from tiny to huge. Do your balancing act I talked about above. If growing by 64 MB will keep you good for several weeks, set it to 64 MB. If 1 GB goes by rather quickly, you can’t use Instant File Initialization, and the users can tolerate the pause it takes to grow by 1 GB, then this might be the best growth rate for you. No single answer is right, but at least you have a better idea of what the variables are.

There are also cases where there are no good answers. Say you have an app wouldn’t be uncommon to grow by a couple GB a day, you can’t use Instant File Initialization, and pauses of more than a second wouldn’t go over very well. You don’t want the fragmentation of setting the growth to 64 MB, but you don’t want the pause of setting it to 1 GB. Maybe you’ll settle for 256 MB, but you’re not happy about it. Manual growths are the real answer, but you want a safety net that won’t kill you with pauses or fragmentation. Not everyone is in that situation, but some of us are.

If I’m going to suggest leaving Autogrowth on and suggest that you be aware of the size of these growths, then the least I can do is give you a script for that. This will tell you the size of every database file and the autogrowth rate. I’ll often use it to tweak the growth rates, so I script that change out in the results as well. If you read the post Database Log VLFs then it will make more sense why I use 1,000 MB here instead of 1,024 MB; it wasn’t a typo.

DECLARE @FilterByDB VarChar(100) , @Cmd NVarChar(4000) SELECT @FilterByDB = '' --SELECT @FilterByDB = 'tempdb' IF Object_ID('TempDB..##FileSize') IS NOT NULL BEGIN DROP TABLE ##FileSize END CREATE TABLE ##FileSize ( DB VarChar(128) , FileLogicalName VarChar(128) , FilePhysicalName VarChar(512) , FileGroup VarChar(128) , Used_MB VarChar(128) , Free_MB VarChar(128) , Size_MB VarChar(128) , GrowthRate VarChar(128) , MaxSize VarChar(128) ) SELECT @Cmd = N'use [?]; IF ''?'' like ''' + @FilterByDB + '%'' OR ''' + @FilterByDB + ''' = '''' BEGIN INSERT INTO ##FileSize SELECT DB = db_name() , FileLogicalName = f.name , FilePhysicalName = f.physical_name , FileGroup = ISNULL(g.name, f.Type_Desc) , Used_MB = REPLACE(CONVERT(varchar(100), (CAST((FileProperty(replace(f.name, '''', ''''''''), ''SpaceUsed'')) / 128 AS money)), 1), ''.00'', '''') , Free_MB = REPLACE(CONVERT(varchar(100), (CAST((f.size - FileProperty(f.name, ''SpaceUsed'')) / 128 AS money)), 1), ''.00'', '''') , Size_MB = REPLACE(CONVERT(varchar(100), (CAST((f.size) / 128 AS money)), 1), ''.00'', '''') , GrowthRate = Case Is_Percent_Growth WHEN 1 THEN '''' + Cast(Growth as VarChar(100)) + ''%'' ELSE REPLACE(CONVERT(varchar(100), (CAST(Growth/128 AS money)), 1), ''.00'', '''') + '' MB'' END , MaxSize = Case Max_Size WHEN -1 THEN ''---'' WHEN 268435456 THEN ''---'' ELSE REPLACE(CONVERT(varchar(100), (CAST(Max_Size/128 AS money)), 1), ''.00'', '''') + '' MB'' END FROM sys.database_files f LEFT JOIN sys.filegroups g on f.data_space_id = g.data_space_id END ' IF @FilterByDB <> '' AND 100 < (SELECT COUNT(1) FROM sys.databases) BEGIN SELECT @Cmd = REPLACE(@Cmd, '?', @FilterByDB) EXEC (@Cmd) END ELSE BEGIN exec sp_MSforeachdb @Cmd END SELECT * , PctUsed = Cast(100 * replace(Used_MB, ',', '') / (Cast(replace(Size_MB,',', '') as Dec(20,2)) + .01) as Dec(20,2)) , SetGrowth64MB = 'ALTER DATABASE [' + db + '] MODIFY FILE ( NAME = N''' + FileLogicalName + ''', /*Size = MB,*/ FILEGROWTH = 64MB )' , SetGrowthTo256MB = 'ALTER DATABASE [' + db + '] MODIFY FILE ( NAME = N''' + FileLogicalName + ''', /*Size = MB,*/ FILEGROWTH = 256MB )' , SetGrowthTo1000MB = 'ALTER DATABASE [' + db + '] MODIFY FILE ( NAME = N''' + FileLogicalName + ''', /*Size = MB,*/ FILEGROWTH = 1000MB )' FROM ##FileSize ORDER BY 1, 4, /*cast(replace(size_mb, ',', '') as int) DESC,*/ FilePhysicalName DROP TABLE ##FileSize

Manual Growths

If you want to be extreme about it, autogrowth is like asking someone about wearing a seatbelt. Do you want it to hurt a little or a lot if you “crash” by running out of allocated space? Well, you want it to hurt…wait…why do I have to crash?

This may seem over-the-top, but some of the applications may have databases like the last one I talked about in the autogrowth section. Typically we’d prefer not to have autogrowth handling everything to avoid the pause while it grows, but there are cases like that where it’s more than a slight preference to avoid the pause.

So the autogrowths are our seatbelts we’re putting on just in case while catching everything with manual growths is our real goal of avoiding that “crash”. However, how are you supposed to know when to grow a file or how much to grow it? Seeing how large your files are now doesn’t tell you how much they’ll grow in the next couple months, they could be static or growing exponentially.

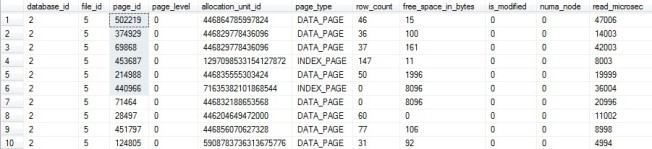

The partial answer is to say how much data was used before, how much data is being used now, and predict how much data will be used in the future using that trend. A better answer is to say what the peak usage was over a week and compare that to past weeks, which would account for things that cause spikes in file usage such as index rebuilds or data loads.

If you have monitoring software, it may have that answer just waiting for you to query it. That’s the situation I find myself in right now, and I wrote Database Growth Trends – Idera Diagnostic Manager talking about how I handle it right now. However, I used to work with monitoring software that pretty much only told me OS-level info, so we watched this data manually as was discussed in my post Monitoring Database and Table Sizes.

To be clear, many databases are fine with autogrowth handling everything. Do what’s best for you for each individual database. I personally have a mix of databases where some I’d be upset if autogrowth kicked in because it would cause timeouts that the app handles extremely poorly, and others, such as my DBA database, where a pause would only affect my collection jobs or a query I’m personally running. Autogrowth is not inherently evil, so only be paranoid where paranoia is called for.

Performing Manual Growths

Manually growing the file is just setting a new initial size. To do that in SSMS, right-click on a database, properties, files, then change the initial size to how large you want it to be.

I’m not a big fan of having SSMS do things for me, so I’ll use “Script Action to New Query Window” and run it form there. This will give me a script like this:

USE [master] GO ALTER DATABASE [DBA] MODIFY FILE ( NAME = N'DBA_Data', SIZE = 256000KB ) GO

One thing I wish I knew about years before I did is that the size in KB also accepts sizes in MB. I write just about everything in MB, so it’s more natural for me to write that script with Size = 250MB instead. I know I’m being petty, but I’m petty and comfortable.

This script could take a couple milliseconds or several seconds, even for 250MB. It all depends on if you have Instant File Initialization turned on and if you’re able to use it for this growth.

Instant File Initialization – What is it?

Short answer, this makes growths exponentially quicker by opening an often-acceptable small security hole.

Of course, I always have a long answer…

By default, all space used by SQL Server is zeroed out before control is handed over. This makes sure that it’s clean space for two basic reasons. First, the database requires that space to be clean because of how it plans to use it. Second, we don’t want to risk the possibility of special commands being able to pull back unencrypted sensitive data that used to be there.

Now the first reason we don’t have much control or say over at this point in the conversation. If you’re growing a log file then it needs to be zeroed out, and SQL Server will zero out each VLF when it’s marked for reuse, too. For data files, if you’re using Transparent Data Encryption (TDE) then the way SQL Server stores the data also requires the space to be zeroed out. These things are outside of the conversation on file growths, it’s just what we’ll have to deal with at this point and SQL Server won’t use IFI even if it’s turned on.

The one thing that will be pulled into this discussion is the security aspect of using uninitialized space. Kimberly Tripp demonstrates this the best in her post Instant Initialization – Why, Why, and How? in the “Why isn’t this on by default?” section.

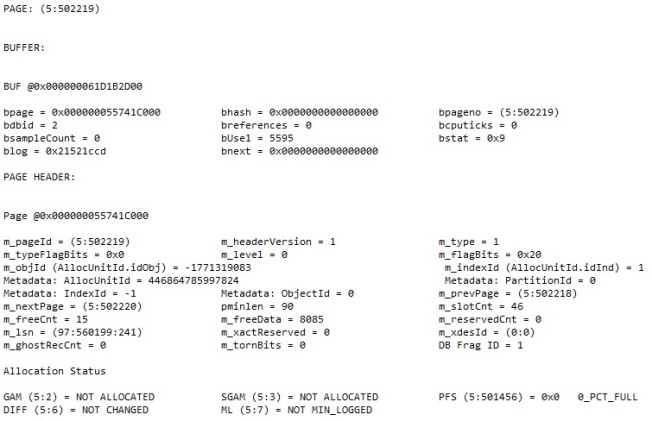

I’ll try to make it short and sweet if you don’t want to read yet another post (I recommend you read it). You can ask SQL Server to read specific pages off the disk. If SQL Server never actually used that page then it contains what was there when it was given to SQL Server. Zero initialized disks are very boring info, just “0000000000….”. Not much fun.

Uninitialized space is more fun (depending on your meaning of the word) and could contain old data. If you had unencrypted sensitive data sitting there before, it could contain enough to steal someone’s identity. You never know. These commands will only work if you have the permissions to run them, but who has those permissions and how much do you trust them? Most organizations say it’s fine, but some won’t.

Instant File Initialization – How do I use it?

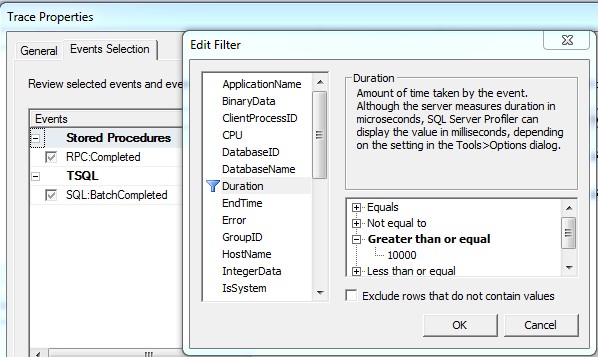

This is an odd feature that you can’t just see in a table in SQL Server. The easiest way is to run an xp_cmdshell command and look for it, but you can optionally turn on trace flags, grow or create a database, turn off the trace flags, then look in your error log.

Paul Randal gets into both methods in his post How to tell if you have instant file initialization enabled?. I don’t have any issues with xp_cmdshell, so I’ll let you go to Paul’s blog if your company insists on the more difficult method.

As for me, I’ll run the query below as a multiserver query in SSMS and look at the results. If that sounds foreign to you, check out my Multiserver Queries post, it’s a very simple and useful technique for specific tasks such as this one. In fact, I wrote that post so I could link to it in this post.

However, even if IFI is turned on, it’s only going to work for data files that aren’t encrypted with TDE. Logs and TDE data files need the zeros. Also, if any database on your instance is using TDE then so is TempDB, which means its data files can’t use IFI either.

CREATE TABLE #Output ( DataOut VarChar(2000) ) DECLARE @XP_CmdShell_Enabled int SELECT @XP_CmdShell_Enabled = CONVERT(INT, ISNULL(value, value_in_use)) FROM master.sys.configurations WHERE name = 'xp_cmdshell' IF @XP_CmdShell_Enabled = 0 BEGIN EXEC sp_configure 'show advanced options', 1 RECONFIGURE WITH OVERRIDE EXEC sp_configure 'xp_cmdshell', 1 RECONFIGURE WITH OVERRIDE END INSERT INTO #Output EXEC xp_cmdshell 'whoami /priv' IF @XP_CmdShell_Enabled = 0 BEGIN EXEC sp_configure 'xp_cmdshell', 0 RECONFIGURE WITH OVERRIDE END SELECT IFI_Enabled = COUNT(1) FROM #Output WHERE DataOut LIKE '%SeManageVolumePrivilege%Enabled%' DROP TABLE #Output

If IFI is turned off and it’s proper to turn it on for this server, here are your steps to turn it on:

- Open Administrative Tools / Local Security Policy

- Local Policies

- User Rights Assignment

- Open “Perform volume maintenance tasks”

- Add the user for the SQL Server service account

If IFI can’t be used, here are your steps (which are also good even if IFI is being used):

- Monitor database sizes for manual growths

- Grow files manually

The SQL Server service account, if you don’t know which account it is, can be found in Administrative Tools / Services / SQL Server (InstanceName) under “Log On As”. There are easier ways find the service account name in bulk if you’re doing this a lot, such as a slight variation of the query above.

xp_cmdshell Rant

Now, to be clear on xp_cmdshell which I used in the script above…. I do believe in security, but I don’t believe disabling this feature is the security you’re looking for. When it’s set up properly, disabling xp_cmdshell is like locking the door to your data center’s supply closet with a keyless lock instead of limiting access to the data center.

I do have issue with granting execute permissions on this to non-sysadmin accounts. I also have issue with granting sysadmin to anyone who asks, including vendors who insist that’s the only way their app will work. This means the only users that can use it are the very, very few with sysadmin. Also, any sysadmin can turn it on anyways, so the only extra security you get is a message in the error log saying it was turned on, but not even who did it.

My recommendation is that if you need it, leave it on. If you don’t, leave it off. If you have a one-time script that needs it, such as checking for IFI, turn it on just long enough to do what you need to do then automate turning it off. The script follows this recommendation; it’s left on if it was already on, but turns it off if it was off.

It’s time for me to admit I’m going off on a tangent, so I’ll pass you off to Sean McCown’s (b|t) Security Theater post if you’re too fired up to stop now.

In Summary

Autogrowth – Yes. Just as a fail-safe if performance is important to you. Limit the size to limit the performance impact, but don’t cause undue fragmentation.

Manual Growth – Yes. Trend how fast you’re growing so you know how to stay ahead of it.

Instant File Initialization – Usually. Understand the limitations and security risks, and turn it on if it’s the right thing to do.

Entry-Level Content Challenge

This is my fourth post in Tim Ford’s Entry Level Content Challenge. I went through much of my career with people saying to manually grow files, but it was left at that. I had no clue how to see how much space was used without clicking through the GUI, and passed it off as being ridiculous. Now I found ways to make it easy, and I’m betting there’s a lot of people out there doing too much work or skipping this simply for not knowing how.

Read over Tim’s challenge and consider joining in. It’s a great way to help people get past barriers you had in the past while learning how to clear them a little more efficiently yourself. Anyone who’s reading this post already knows how to clear some barriers that others are viewing as an impassible brick wall, so you can make a difference for them.