First, lets understand what the types of indexes are. If you take a reference book, you have the two types of indexes. The book itself is a clustered index, it contains all the data and has the key field of page number. The index in the back is your nonclustered index, it’s in order by the key field of subject, then has the implied second key field in there for page number, which nonclustered indexes in SQL Server have as well. On top of that, the table of contents at the beginning of the book is just like a b-tree built on every index that helps you get to the right page faster.

The key fields I mentioned are the fields everything is ordered by, just as you would expect a book to be ordered by page number. Nonclustered indexes also have implied key fields, which are all of the clustered index’s key fields that weren’t explicitly made key fields already, which is a great reason to keep your clustered indexes as narrow as possible in most cases, which means they have fewer columns.

If you have a phone book then you have a clustered index with the key fields last_name, first_name, address, phone_number. That means it’s ordered by each one, so any duplicate last names are still in order by first name, and so on. It’s wide for a clustered index, but doesn’t have any nonclustered indexes to worry about, so wide is fine here.

However, the important thing to notice is that if I asked you for people with the last name “Hood”, you’d find them rather quickly. If I asked you whose phone number is 412-555-6789, you’d cringe. The same thing happens in SQL Server where it will do the work you ask of it, but it won’t be cheap, quick and easy. This is why the internet has reverse phone number lookups where they have a nonclustered index on phone number which lets you find the name quickly by just knowing the number.

Indexes can also have included columns which are stored in the index, but no sorting is done on them. These fields don’t even exist in the b-tree for finding the information, only the page level where everything is stored. To simplify the process, this is where you put fields that you have in your SELECT clause but not your WHERE clause since don’t need it sorted to search quicker, you just need it to fulfill your query’s needs.

How are they used, and what do they cost?

A nonclustered index is used exactly the same way you read a reference book. You look up the key field in the back of the book and see what information is there. If you covered all the information that you needed just by looking at that index then you’re done. However, if you want to know more that wasn’t listed in the index then you take the key field of the clustered index (the page number) and do a key lookup by flipping to those pages and reading more about the subject at hand.

If you noticed, reading all that information in the back of the book seems pretty simple and efficient, especially when all of the information is there so you don’t have to touch the book itself. If you don’t have to touch the book then that’s called a covering index, which can be accomplished by adding the fields you want to retrieve to an index as either included or key columns. Depending on how many of these key lookups you have to do and how much extra information it has to get from the clustered index, it may make sense to make your index a covering index.

However, none of this is free. Every index you make has to be maintained. If you ignore filtered indexes, which I’ll get to soon, every time you insert or delete rows on a table then you have to write to each index. So if you have 6 nonclustered indexes, it doesn’t matter if you have a clustered index or a heap (merely a lack of a clustered index), you have to do one write to the table itself and one write to each nonclustered indexes, so 7 writes for that one row.

Add on top of that maintaining those indexes for fragmentation, extra storage needed on disks and backups, and more data that can possibly be held in the cache, and this stuff really starts to get expensive.

How many indexes should I have?

There’s no magic number of indexes you should have on any single table. Too few makes it hard to find the data, and too many makes a maintenance nightmare with data modifications taking longer. What indexes and how many will vary wildly, but there are methods to figure out what’s appropriate for each table. Chances are, many of your tables will benefit from both dropping indexes and creating them.

Consolidate what you have

First, look at the indexes themselves. If you have indexes that have the same first key field as the clustered index then you can consider removing those indexes with little to no detrimental effects. This is regardless as to if these indexes are being used or not.

Now, look at what indexes are never used. This can be done by looking at sys.dm_db_index_usage_stats to get you up to a month of data (assuming a monthly reboot for patching), or, my preferred method, by querying your tables you have set up that monitor this DMV. If an index is never being used it means it’s never helping you, but it is extra weight that’s adding up and slowing your database down. Just make sure you have enough data to say that the index really isn’t needed before relying on this alone.

Also, look for patterns in the indexes themselves by comparing the first couple key fields. If the couple key fields are the same, these are candidates for having the indexes combined into a single index. Depending on how quickly those key fields make the data semi-unique, you may be talking one to three key fields matching before I’m itching to reduce the number of indexes. You can do this by taking the columns that are in the second index that aren’t in the first and adding them to the first index as included columns, then drop the second index. Queries that used to hit either one of these indexes should run slower by an unnoticeable amount, yet you’re only storing data, doing updates, and maintaining a single index instead of two.

When you’re going through this process you just have to be careful that you understand the implementations of dropping a unique index. It is rarely a good idea to get rid of a unique index unless that uniqueness is enforced by other means as well.

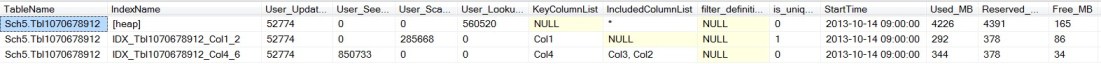

Take this example. I have a heap with a unique index on Col1 that gets scanned, but no seeks. Also, there’s a NC index on Col2 that has a lot of seeks then is obviously doing a lot of lookups to get more information from the heap. In this case I happen to know that the “unique” part of the index on Col1 is because that’s an identity column, so I’m not too concerned about maintaining the unique constraint here. After seeing the usage stats over a month I’m able to restructure this table. Both nonclustered indexes were dropped, a new unique clustered index was created on Col4 and Col1. User Lookups were eliminated since it’s all just a clustered index now, we saved about 700 MB on disk, and the compressed equivalent on backups as well.

Take another example where you can see that an index is duplicated. No, none of these are 100% the same, but the second index is the unique (is_unique field), clustered (* for included columns, it’s just my way of doing it) has a single key column on Col1, which is the same as the first nonclustered index. Seeing the usage like this, I’m going to drop the first index, saving 435 MB of space in my database. This will have a negative impact in that it will move 626 index scans done throughout the month to an index that’s twice the size, and that’s a problem I’ll revisit later when I’m tuning queries that have high physical reads.

Notice you can also see two issues on this table that I’ll need to look into. First, I have a nonclustered index that’s larger than my clustered index, something is wrong here. Perhaps I should have put the fill factor on here to show that wasn’t the issue. The real answer is that I’m working on a table that somehow got missed on the index maintenance, and I now know that this is possible and needs to be fixed. Another issue is that there is a filtered index on here that has about 0.1% fewer rows than the unfiltered indexes, which means that you’re getting no benefits while alienating queries that may have used this otherwise.

And one last example. The 2nd and 3rd indexes both have a single key field on Col2 and just vary on the included columns. This is a simple task of combining the two indexes by making one of them include Col1, Col2, Col6, Col7, Col8, and Col9, then dropping the other. That saves us about 200 MB on disk, and avoids us having duplicated data in the cache. Ask a SAN or backup admin and they’ll tell you the disk savings is great, but ask an unrealistically informed user and you’ll realize that cache savings is what makes a difference dropping your physical reads, upping your PLE, and making your app runs faster.

Then, there’s another simple task on this table of dropping the final index, which is nothing more than 112 MB of dead weight that was being updated an average of 30 times a day. Not much to say here, they obviously didn’t use this as much as they thought they would.

Finally, you’re left with the info that User_Lookups is almost as high as the total number of seeks and scans on the nonclustered indexes. This means that almost every query that hits the nonclustered indexes need to do a key lookup on the clustered index. Knowing that, if I was going to get to that level of tuning, I would be looking into the queries that hit this table and determine if I want to add more included columns to actually cover the query or admit that I’ll never cover it efficiently and remove some included columns. My answer here will vary partially depending on how many records are involved – key lookups are ok for 10 rows, but not so much for 1,000,000 rows.

None of these are hypothetical situations I forced AdventureWorks to do. This is a real, production database, and I wrote two queries against it. One to help me find these problems so I can put a change request in to fix them, and a second query that does the exact same thing while obfuscating the names to protect my employment. So when you see the duplicated indexes or the sizes I’m working with, you can’t say that would never happen in production….it just did.

You reduced the load, now add to it

Now that you have fewer indexes in your database, it’s time to add to them. There are several ways to figure out what indexes you want to add, and I prefer to do everything manually. That means there’s no DTA (Database Tuning Advisor) here, it’s all scripts with proof that what I’m doing is making a difference.

Where are you finding the queries to tune

There are a couple approaches to finding the queries in most need of attention. Query Stats will give you the queries that take the most resources during your peak times, which will reduce the load on your server the quickest. Traces on duration will give you the queries that take the longest along with sample calls with all the parameters you need. Blocking monitors will give you the queries other queries are waiting on the most. Then, one of my favorites, job shadowing gives you what the pain points are for the users.

The first thing I look at is blocking, since that silently sits in the background making queries that could run extremely fast sit there and wait for a single slow query. If there is a lot of consistent blocking then take the head blockers and start tuning them. This won’t eliminate the fact that they’re grabbing locks, but it will cause those locks to be released quicker. Locks by themselves are not an issue and shouldn’t be eliminated, but the impact the locks have on other queries are an issue that is best to be handled this way. If you don’t have many locks that go too long, you can skip this and revisit it later. I say “too long” instead of giving you a number because it varies a lot; 5 seconds is too long for a web app while a minute isn’t much for a data warehouse.

Once that is out of the way, I’ll start diving into the most expensive queries on the server. They may not be the ones with the longest average run-time, but they add up to the most on the server. If you can cut the reads from 100,000 to 1,000 on a proc called every second then you’re doing more for the server than you would by tuning an hourly query that does 10,000,000 reads. Also, focusing on physical reads will help raise your PLE if that’s a concern on your server, which it usually is. Don’t stick on this for too long, you’re just trying to get the easy tuning out of the way and hopefully change the look of your Top-10 most expensive queries list a bit.

Now I move on to looking at the traces to see if any huge queries are running that are easy tuning opportunities. Again, skim through it, pick up the low hanging fruit and move on. Right now you’re still in the phase of reducing the load on the server, but you may not have hit what the users see yet.

Another place to look is in the cache to find out what indexes are taking up the most space there. If you know your data well enough then you should know what to expect from your cache. What you see there will probably surprise you, and you’ll probably see an Index_id of 0 or 1 (Heap or Clustered Index) sitting there taking 10 times what you would expect it to do. These are cases where there are clustered index scans taking place that you need to find. I was recently able to clear up 12 GB of cache on a server doing a full index scan on a 12 GB table where a 10 MB filtered index was able to do the job, which made PLE almost double.

So, now that you have the server running a little (or a lot) better in general, I get into the work that never ends. Get a hold of the people using the app, either by yourself or asking customer service to arrange it, and find out what they hate the most about the app’s performance. What you need here is for someone to write a list of when anything took a while, what screen they were on, and approximately how long it took. Don’t go requesting anyone to use stopwatches, you’re looking for “a couple seconds”, “about a minute”, or “I click OK then go get a cup of coffee.” Your goal here is to be able to correlate their pain points to something captured in your traces, giving you the procs they’re using along with real-life example calls to dive into.

Something you may find odd is that I’m diving into the part that affects the end users the most last, but I do it on purpose. The other pieces are things I skim through and do what I can in a week or so, but they’ll make the bigger impact on the server in general along with resolving some of the customer’s complaints in the process. In the process, you’re learning more about the server and how everything goes together so that when you start talking to your customers you can make a more immediate impact on their complaints.

After getting through list once I let my ADD take over, and man do I have ADD. The customers have an idea of what a DBA can do for them now to the point that they may even be contacting you directly when something annoys them, developers realize a SQL Server specialist can be a good person to lean on, and your server’s biggest pain point hurts a lot less. Once you get to this point, jump around on this list on your servers and take up a query or two when you feel like it.

Related articles

- SQL Server Running Slow (simplesqlserver.com)

- Query Stats (simplesqlserver.com)

- Tracing Introduction (simplesqlserver.com)

- Fixing Page Life Expectancy (PLE) (simplesqlserver.com)

- Blocking – Capturing and Monitoring (simplesqlserver.com)

What query did you use to get the resulting table with the columns ‘TableName, IndexName, User_Updated,…’?

DECLARE @TableName VarChar(128) SET @TableName = '%' SELECT TableName = i.SchemaName + '.' + i.TableName , i.IndexName , i.index_id , i.User_Updates , i.User_Benefits , KeyColumnList = substring((SELECT (', ' + c.name) FROM sys.index_columns ic INNER JOIN sys.columns c ON ic.object_id = c.object_id AND ic.column_id = c.column_id WHERE i.object_id = ic.object_id AND i.index_id = ic.index_id AND ic.is_included_column = 0 ORDER BY ic.key_ordinal FOR XML PATH ('') ), 3, 2000) , IncludedColumnList = CASE WHEN i.IndexType = 'Clustered' THEN '*' ELSE substring((SELECT (', ' + c.name) FROM sys.index_columns ic INNER JOIN sys.columns c ON ic.object_id = c.object_id AND ic.column_id = c.column_id WHERE i.object_id = ic.object_id AND i.index_id = ic.index_id AND ic.is_included_column = 1 ORDER BY ic.key_ordinal FOR XML PATH ('') ), 3, 2000) END FROM ( SELECT TOP 1000 SchemaName = s.name , TableName = t.name , IndexName = i.name , IndexType = i.type_desc , i.object_id , i.index_id , User_Updates = SUM(ius.User_Updates) , User_Benefits = SUM(ius.user_seeks + ius.user_scans + ius.user_lookups) FROM sys.dm_db_Index_Usage_Stats ius INNER JOIN sys.indexes i ON ius.object_id = i.object_id AND ius.index_id = i.index_id INNER JOIN sys.tables t ON i.object_id = t.object_id INNER JOIN sys.schemas s ON t.schema_id = s.schema_id WHERE ius.database_id = DB_ID() AND t.name LIKE @TableName GROUP BY s.name, t.name, i.name, i.object_id, i.index_id, i.type_desc ) i ORDER BY /*schemaname, tablename,*/ KeyColumnList DESCThis script is very similar to what I used. I have two versions of this, and the one I used relies on having the data stored so you can see it long-term. This version will only go back as far as dm_db_Index_Usage_stats stores it, which is on server reboot, or for SQL 2012+, it also gets reset when you rebuild indexes.

Thanks Steve. The query you provided doesn’t show the size information (Used_MB, Reserved_MB, Free_MB and Row_Count) which is what I was really interested in. Can you supply the SQL to show that or point me towards where to find the info I want?

No problem. I should be able to get on my computer later today.

Sorry for the delay. There was less time on the computer over the holidays than expected. Here’s the exact query I use on a daily basis with the table in my DBA database commented out and replaced with sys.dm_db_index_usage_stats. As you can see, I’m not too creative with my column names (which is a good thing here).

There’s no need for the extra grouping I have here in a stand-alone version. However, when you capture this data on an hourly basis there is more than one row in my index usage table for each index. That’s my long way of telling you to ignore that this could be slightly more efficient.

Awesome, thanks for this. No need to apologize for the delay, I hope you had a good Thanksgiving.

Pingback: Indexing Strategy | Simple SQL Server

Pingback: Which should I use on my DB? - Clustered Index or Non Clustered Index or Both